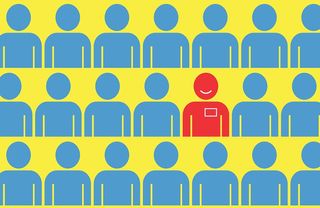

Cracking the 'Chicken and Egg' Dilemma: How Equitable Internships Can Propel Recent Graduates into Successful Careers

The founding director of the Partnership for Inclusive Innovation shares tips for promoting internships that are equitable and accessible to all.